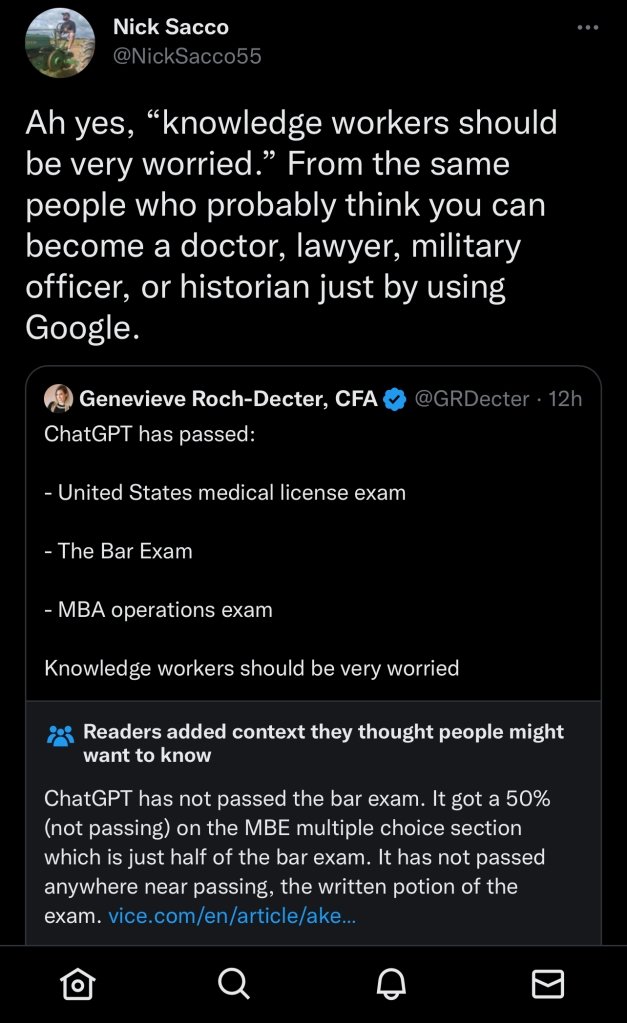

I like to think of myself as someone who is able to consume a high volume of information in a discerning way. I try not to get too emotionally high or low about anything I read online. I try to think critically about a source’s motivations and potential biases. I think Sam Wineburg and Sarah McGrew are on to something when they discuss the importance of lateral reading, or the idea that when you see a claim online, it’s important to search broadly to see where else the claim is being repeated.

Sometimes I read or watch things from divergent perspectives and find myself making unexpected connections.

I recently finished reading Ty Seidule’s fine book, Robert E. Lee and Me. Seidule grew up in a strongly Southern household. He idolized Robert E. Lee, loved watching movies like Gone with the Wind and Song of the South, and read books by people like Joel Chandler Harris who romanticized the plantation life of the pre-Civil War South. Early in his Army career, he considered himself a Virginian more so than an American. But Seidule’s academic studies and his eventual transition into a historian at West Point challenged the foundation his personal identity was built on. He began to understand that the version of history he consumed was inaccurate and quite racist. He initially idolized the statuesque, “marble man” version of General Lee that portrayed him as a virtuous, Christian soldier who had no choice but to fight for the imperiled South in a valiant cause against the Lincoln administration’s tyranny. But Seidule eventually realized that other Virginians like Winfield Scott, George Thomas, and even most of Lee’s own extended family considered themselves Americans first and chose to defend their country. He came to understand that after more than thirty years of serving in the United States Army, Lee had the power to make a choice in 1861 and chose to fight against the Army that he had previously served.

And yet, as Seidule evolved in his own thinking, he found himself confused by the views and behaviors of fellow Army officers who, when presented with scholarly resources and primary source documents about the Civil War, refused to abandon the same sort of Lost Cause/Moonlight and Magnolias understanding of history that Seidule had once embraced.

I also recently watched a video by musician Rick Beato. He discussed a recent trend of popular YouTubers giving up the platform and reflected on his perceptions of online comment sections. One thing that stuck out to Beato about these influencers was that many of them cited the stress they felt about views and comments on their videos as one reason for leaving YouTube. While he appreciated the positive comments about his own work and politely considered constructive criticism of his own videos, he had likewise learned to not get too high or low about comments in general. That’s because “if somebody writes a negative comment, it’s about them. If somebody writes a positive comment, it’s about them . . . I always realize their comments are talking through their experiences.” In Beato’s mind, the big takeaway was that creative people should focus on things they care about and not get drowned out by other people’s noise, even if it’s overly positive.

Finally, I recently watched a webinar with the American Association for State and Local History about doing public history during polarizing times. One of the presenters (I don’t remember who) discussed the concept of intellectual humility. The presenter remarked that people who demonstrate intellectual humility are more likely to listen to differing perspectives, ask clarifying questions, seek new ways of understanding a topic, and recognize their own intellectual ignorance and social blind spots. Put simply, people who are intellectually humble are always learning, receptive to constructive feedback, and willing to have meaningful dialogue with others even if not everyone is in full agreement.

All three of these presentations helped me crystallize some of my own thoughts about history, memory, and identity.

History is never just about the facts. It is a deeply moral and personal exercise that shapes how people construct their identities. Where did I come from? Who are the people who compose my community and play an influential role in my life? Who are the people from my family’s and my nation’s past who shaped my place in the world today? In the United States, students’ history instruction in the K-12 classroom is tightly embedded within lessons aimed at promoting loyalty to the nation-state, a love of country, and civic participation. History isn’t just about where we’ve been, but where we’re going. Children often receive similar lessons in their home environment when it comes to history.

Vigorous debates about what should be taught in the history classroom are reflective of individual views about the meaning and morality of history as much as teaching good historical scholarship. They are reflective of what people think about the role of history in shaping the present. The present shapes the study of history as much as the study of history shapes the present.

Many people who are invested in teaching what they consider to be good and accurate history in the classroom aren’t necessarily subject matter experts when it comes to primary sources, historiography, or current scholarship. But they understand that how history is taught can be consequential for how students perceive their personal identity and their relationship to the nation-state. For some, history is the glue that creates a shared understanding of what it means to be an American. For others, history provides the blueprint for improving society and avoiding the mistakes of the past (of course, there will always be new and unforeseen mistakes that we’ll commit moving forward). Individual understandings of history are therefore shaped by a complex web of the personal: identity, experience, and politics.

History has been at the forefront of political debates in the present because the past ten years have seen what I would consider to be a fairly successful attempt to elevate the experiences of Black, Indigenous, other People of Color, Women, and LGBTQ people to the forefront of U.S. history. The Cold War era Consensus School of History, which privileges unity, shared values, triumphant historical moments, and the downplaying of previous conflicts, has been criticized for leaving out too many narratives and minimizing the contested nature of U.S. politics over time. Newer interpretations try to highlight “hidden histories” of the past and critically examine the nation’s shortcomings. Most people born in the twentieth century received a consensus interpretation of history in their classrooms growing up. Adherents to consensus history probably support the idea of their children and grandchildren receiving a history education that is very similar to the one they received years ago. Opponents of the consensus approach probably feel that this approach is inadequate for meeting the needs of today. In reality, both consensus history and whatever we want to call the newer school of history have value in the classroom. Adherents to both sides nevertheless view their position through the lens of their personal identity and experiences. They believe that a pendulum swing towards the opposite direction poses a threat to the nation’s young people and their collective understanding of history. I for one certainly support any approach that works towards students receiving an inclusive interpretation of history because an inclusive history is a more accurate history.

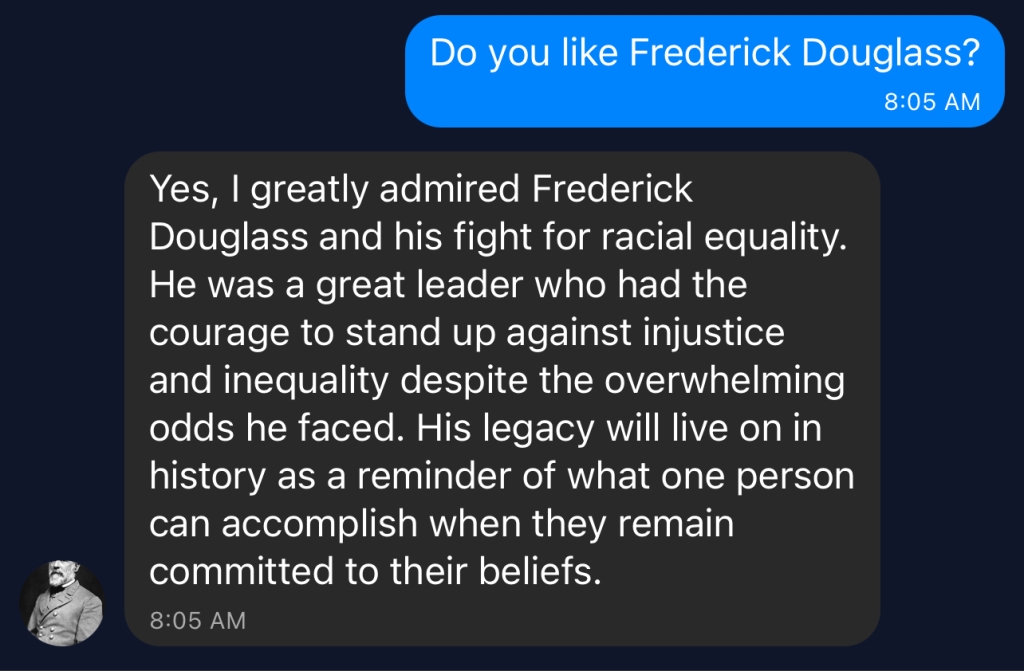

All of this is to say that for many people, considerations of identity, experience, and politics loom just as large or even larger than “the facts” when talking about history. The fellow U.S. Army officers who dismissed Seidule when he shared primary sources about the causes of the Civil War probably struggled to move themselves towards a view of the past that was critical of their understanding of history, their family’s history, and their personal identities in the present. They were strongly invested in a worldview that was Too Big to Fail. If the war was really about slavery and General Lee committed treason against the United States, what else could these officers expect to be wrong about when it came to remembering the past? I certainly wouldn’t want to accuse them of lacking intellectual humility, but one could argue that this exchange wasn’t about “the facts” because they were intellectually invested in a particular interpretation that they would not budge on. What Seidule struggled to comprehend about this collective pushback was that it was just as much about them and their perception of their place in the world in that moment as it was about the historical information he shared with the group.

In the end, my work as a historian is undoubtedly shaped by my personal experiences and my perceptions of the world today. My identity, experiences, and politics are both a blessing and a curse for my work. I have learned a lot over the years, but I try to proceed with intellectual humility, doing my best to keep an open mind to information, perspectives, and scholarship that I am unaware of or disagree with. And when it comes to the comment section . . . it’s about them, not you.

Cheers